Signal is working on a standalone version of its desktop app that does not require a smartphone. Signal Desktop will also gain additional options when used as a linked device.

Signal’s end to end encrypted, yes… But we do the key exchange process through Signal’s servers, don’t we? How do we know they don’t store copies of the keys? Does the client have a mechanism in place to make sure the man in the middle doesn’t do anything funny? I haven’t actually delved very deep into the code, but it sounds like I should.

And… Sure, their server code may be open source too, but nobody guarantees that that’s the code actually running on their servers.

Here is an overview of all audits done on Signal: https://community.signalusers.org/t/overview-of-third-party-security-audits/13243

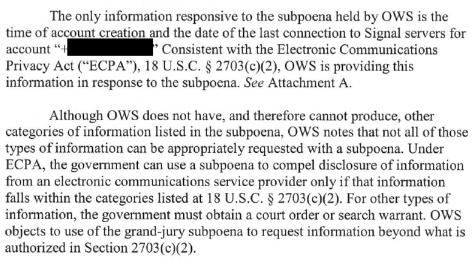

No recent server audits really. They are pretty public about information requests made by the government and their responses though, and from what I can see the only pieces of information they have shared with the government to this point are the time of account creation and the date of the last connection to Signal servers:

You can check more of their responses from here: https://signal.org/bigbrother/

My thinking also. Corps/gov can also link identity to emails of course, but it’s much harder due to email aliasing and the ease of new account creation.

Mobile phone numbers are far more personal - people generally only have one or two max and generally keep them for very long periods.

If its the true reason, it would make Signal not much better than Meta’s WhatsApp, which gleans value from its users by noting metadata tying users to one-another by who they contact, when, and how often to extrapolate social circles and relationships. But Meta goes further in I think also tracking location, etc, and obviously has much more personal data in the linked phone numbers of many FB/Insta accounts… Signal could potentially be doing some of that to a degree with IP geolocation… Not great.

TLDR: its the one thing stopping me from trusting Signal entirely as a benevolent actor - they want and ‘need’ your personal phone number. I use it still, as its the best available mainstream option, but I mention this concern when recommending it to those seeking privacy.

They will also likely require a captcha done using webview, exposing many hardware identifiers to Google.

They will once again claim this is to protect against spammers and not to track people.

Signal is obviously a honeypot, as it never would have taken this long to release a desktop App not linked to a phone if it weren’t a honeypot, and they still are requiring a phone number. Any “privacy” company that adopts real privacy features only after huge pushback from the privacy community years later is likely a honeypot. Look at “tuta,” which a former government worker testified under oath is a honeypot: no accepting of Monero ever. Finally, when called a honeypot, they make a deal with a third party to accept Monero.

Signal is probably releasing this because whoever owns the honeypot wants to link desktops to phone numbers, allowing the desktops to be hacked with something like Pegasus as well.

Does anyone remember that accidental LinkedIn post showing all the Apps that one company was able to hack? And it showed WhatsApp, Signal, Telegram, etc… And it was always based on a phone? And this photo was accidentally posted online before being scrubbed? I forget the details on it and if anyone remembers, please post about it to jog my memory. Well, now perhaps that group wants to hack laptops too.

I don’t believe this is anything other than more bullshit. It wouldn’t surprise me if they also blocked VOIP for registration. Fuck Signal.

They want to be able to link accounts to your identity, obviously.

You know, I’ve been thinking…

Signal is end-to-end encrypted, yes… But the key exchange goes through Signal’s servers, doesn’t it? How do we know they don’t store copies of the keys? Does the client have a mechanism to make sure a man in the middle isn’t doing anything funny? I haven’t actually delved very deep into the code, but it sounds like I should.

And… Sure, their server code may be open source too, but nobody guarantees that that’s the code actually running on their servers.
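For what it’s worth, the client-side check asked about above does exist in Signal: safety numbers. Two users can compare these in person or over another channel, and Signal warns when a contact’s safety number changes.

Below is a simplified, hypothetical sketch of the idea. It is loosely modeled on that scheme but is not Signal’s exact algorithm. It works like this:

1. Each side hashes an identity public key plus an identifier many times.
2. Each side turns the resulting digest into digits.
3. Both halves are combined in a fixed order.

If the server substituted its own key during the exchange, the two users would end up with different numbers. The constants `ITERATIONS` and `VERSION`, the function names, and the placeholder keys are all assumptions made for illustration.

```python
import hashlib

# Hypothetical parameters for illustration only; loosely modeled on,
# but not identical to, Signal's safety-number scheme.
ITERATIONS = 5200
VERSION = b"\x00\x00"


def party_digits(identity_key: bytes, identifier: bytes) -> str:
    """Derive 30 decimal digits from one party's identity key and identifier."""
    digest = hashlib.sha512(VERSION + identity_key + identifier).digest()
    for _ in range(ITERATIONS):
        # Iterated hashing makes searching for a look-alike key more expensive.
        digest = hashlib.sha512(digest + identity_key).digest()
    digits = []
    for i in range(0, 30, 5):
        # Read 5 bytes as a big-endian integer, keep 5 decimal digits.
        chunk = int.from_bytes(digest[i:i + 5], "big")
        digits.append(f"{chunk % 100000:05d}")
    return "".join(digits)


def safety_number(key_a: bytes, id_a: bytes, key_b: bytes, id_b: bytes) -> str:
    """Combine both halves in sorted order so both sides get the same 60 digits."""
    parts = sorted([party_digits(key_a, id_a), party_digits(key_b, id_b)])
    return "".join(parts)


# Placeholder 32-byte "public keys" (hypothetical stand-ins, not real keys).
alice_key = b"A" * 32
bob_key = b"B" * 32
mallory_key = b"M" * 32

# Each user computes the number from the keys they actually received.
alice_view = safety_number(alice_key, b"alice", bob_key, b"bob")
bob_view = safety_number(bob_key, b"bob", alice_key, b"alice")
print(alice_view == bob_view)

# If the server handed Alice Mallory's key while claiming it was Bob's,
# Alice's number would no longer match the one Bob sees.
tampered_view = safety_number(alice_key, b"alice", mallory_key, b"bob")
print(tampered_view == bob_view)
```

The check only helps if people actually compare the numbers (or scan the QR code) outside the server’s channel. It does not prove anything about what the server stores, only that the keys you received match the ones your contact holds.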

They ship their app with blobs, so we cannot verify what it is doing.

Here is an overview of all audits done on Signal:

https://community.signalusers.org/t/overview-of-third-party-security-audits/13243

No recent server audits, really. They are pretty public about information requests made by the government and their responses, though, and from what I can see the only pieces of information they have shared with the government so far are the time of account creation and the date of the last connection to Signal’s servers.

You can check more of their responses from here:

https://signal.org/bigbrother/

My thinking also. Corps/gov can also link identity to emails of course, but it’s much harder due to email aliasing and the ease of new account creation.

Mobile phone numbers are far more personal: people generally have one or two at most and keep them for very long periods.

If that’s the true reason, it would make Signal not much better than Meta’s WhatsApp, which gleans value from its users by using metadata that ties users to one another (who they contact, when, and how often) to extrapolate social circles and relationships. But Meta goes further, I think also tracking location, etc., and obviously has much more personal data in the phone numbers linked to many FB/Insta accounts… Signal could potentially be doing some of that to a degree with IP geolocation… Not great.

TLDR: it’s the one thing stopping me from trusting Signal entirely as a benevolent actor: they want and ‘need’ your personal phone number. I still use it, as it’s the best available mainstream option, but I mention this concern when recommending it to those seeking privacy.

For me, it’s the blobs. We can’t trust anything they do so long as the code they ship isn’t 100% open source.

They will also likely require a CAPTCHA done in a WebView, exposing many hardware identifiers to Google.

They will once again claim this is to protect against spammers and not to track people.

Signal is obviously a honeypot: it never would have taken this long to release a desktop app not linked to a phone if it weren’t, and they still require a phone number. Any “privacy” company that adopts real privacy features only years later, after huge pushback from the privacy community, is likely a honeypot. Look at “Tuta,” which a former government worker testified under oath is a honeypot: it never accepted Monero, and only after being called a honeypot did it finally make a deal with a third party to accept Monero.

Signal is probably releasing this because whoever owns the honeypot wants to link desktops to phone numbers, allowing the desktops to be hacked with something like Pegasus as well.

Does anyone remember that accidental LinkedIn post showing all the apps that one company was able to hack? It showed WhatsApp, Signal, Telegram, etc., and it was always based on a phone. The photo was accidentally posted online before being scrubbed. I forget the details, so if anyone remembers, please post about it to jog my memory. Well, now perhaps that group wants to hack laptops too.

I don’t believe this is anything other than more bullshit. It wouldn’t surprise me if they also blocked VoIP numbers for registration. Fuck Signal.

Extraordinary claims require extraordinary evidence.